Hello!

We Are Haptic Vision

We are building a real-time AI navigation rig that translates your surroundings into four simultaneous sensory modalities. Entirely local. No network latency.

Sensory Awareness

Haptic Feedback

Three independent vibration motors pulse with an intensity that increases as obstacles get closer.

Spoken Alerts

Priority-ranked spatial audio for critical warnings like "Car ahead, 0.9m".
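As a rough illustration of how priority-ranked alerts can work, here is a minimal sketch built on Python's `heapq`. The priority levels (`CRITICAL`, `WARNING`, `INFO`), the `Alert` and `AlertQueue` names, and the rule that an interrupted alert is simply dropped are all hypothetical choices for this example, not the project's actual code; the text-to-speech engine itself is not shown.

```python
import heapq
import itertools
from dataclasses import dataclass, field

# Hypothetical priority levels: a lower number means more urgent.
CRITICAL, WARNING, INFO = 0, 1, 2


@dataclass(order=True)
class Alert:
    priority: int
    seq: int                          # tie-breaker: equal priorities play in arrival order
    text: str = field(compare=False)  # not used for ordering


class AlertQueue:
    """Illustrative priority queue for spoken alerts (not the project's actual code)."""

    def __init__(self):
        self._heap = []
        self._counter = itertools.count()
        self.speaking = None  # alert currently being spoken, if any

    def push(self, priority, text):
        """Queue an alert; return True if the caller should cut off current speech."""
        heapq.heappush(self._heap, Alert(priority, next(self._counter), text))
        if self.speaking is not None and priority < self.speaking.priority:
            self.speaking = None  # the interrupted alert is dropped as stale
            return True
        return False

    def next_alert(self):
        """Pop the most urgent alert, mark it as speaking, and return it (or None)."""
        self.speaking = heapq.heappop(self._heap) if self._heap else None
        return self.speaking
```

For example, while "Bench on the left" is being read out, pushing `(CRITICAL, "Car ahead, 0.9 m")` returns `True`, the caller stops speech, and `next_alert()` yields the car warning. Dropping rather than resuming the interrupted message is one possible design: a distance reading is usually out of date by the time it would be replayed.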

Facial Recognition

Registered people are identified and announced by name and direction.

AI Scene Narration

On-device Google Gemma 4 provides natural-language descriptions of your environment.

The Local-First Stack

NVIDIA Jetson Orin NX

Runs deep learning and computer vision models at the edge, on the wearer's own hardware.

ZED 2i Stereo Camera

Industrial-grade stereo depth sensing that works across a wide range of lighting conditions.

Arduino Uno

Low-latency control of the three haptic motors and sensor arrays.

Google Gemma 4

State-of-the-art scene narration running entirely on-device, without an internet connection.

Intelligent Perception

Visual Engine

The ZED 2i stereo camera captures depth and motion at 1080p, detecting everything from stairs to low overhead obstacles up to 8 m away.

Edge Intelligence

Powered by NVIDIA Jetson, our on-device models recognize faces and objects in real time without ever needing the cloud.

Haptic Mapping

A 3-zone vibration belt translates distance into intuitive pulses. Left, center, and right zones keep you centered and safe.
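One simple way to feed a 3-zone belt is to split each depth frame into left, center, and right thirds and report the nearest valid distance in each. The sketch below assumes the depth frame arrives as rows of per-pixel distances in meters with invalid pixels marked as NaN or infinity; the function name `zone_distances` and the equal-thirds split are illustrative assumptions, not the project's confirmed pipeline.

```python
import math

MAX_RANGE_M = 8.0  # matches the 8 m detection range quoted above


def zone_distances(depth_rows):
    """Return the nearest valid distance (m) in the left, center and right thirds.

    depth_rows: list of rows, each a list of per-pixel distances in meters.
    Invalid pixels (NaN, inf, <= 0) and readings beyond MAX_RANGE_M are ignored.
    A zone with no valid reading reports math.inf ("nothing in range").
    """
    width = len(depth_rows[0])
    bounds = [(0, width // 3), (width // 3, 2 * width // 3), (2 * width // 3, width)]
    nearest = []
    for start, end in bounds:
        best = math.inf
        for row in depth_rows:
            for d in row[start:end]:
                if math.isfinite(d) and 0 < d <= MAX_RANGE_M:
                    best = min(best, d)
        nearest.append(best)
    return nearest  # [left, center, right]
```

With a tiny 2×6 frame such as `[[5.0, 2.0, 9.0, 1.2, 3.0, nan], [4.0, 2.5, 7.0, 1.5, 6.0, 0.8]]`, this returns `[2.0, 1.2, 0.8]`: the 9.0 m reading falls outside the range and the NaN is skipped. Taking the strict minimum is sensitive to single noisy pixels, so a real pipeline would likely use a low percentile or a short temporal filter instead.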

Smart Routing

Record familiar routes once to receive waypoint-based navigation with combined haptic and audio turn-by-turn cues.

Advanced Spatial Awareness

Haptic Vision is a local-first wearable system that translates your surroundings into four simultaneous sensory modalities. No cloud. No network latency.

Core Perception

- Sub-Meter Depth: 1080p/30fps mapping out to 8 meters.

- Threat Detection: Dual YOLO AI models identify hazards in real time.

- Face Recognition: Depth-verified "True Liveness" identity security.

Haptic & Audio

- Scaled Haptics: Natural-feeling vibrations via Weber-Fechner scaling.

- Dynamic Zones: Warning range scales automatically to your walking speed.

- Pre-emptive Audio: Priority-queued alerts interrupt lower-priority speech to deliver critical warnings.
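The scaled haptics and dynamic zones above can be illustrated with a short sketch. It assumes one interpretation of Weber-Fechner scaling: equal *ratios* of distance (each halving) add equal steps of vibration, giving a logarithmic rather than linear curve. It also assumes the warning range grows linearly with walking speed. The constants `MIN_DIST_M`, `BASE_RANGE_M`, and `LOOKAHEAD_S`, the function names, and the 8-bit PWM output (0 to 255, as used by Arduino `analogWrite`) are hypothetical; where the speed estimate comes from is not shown.

```python
import math

MIN_DIST_M = 0.3    # hypothetical: at or inside this distance, vibrate at full strength
BASE_RANGE_M = 1.5  # hypothetical warning range when standing still
LOOKAHEAD_S = 2.0   # hypothetical: warn this many seconds before reaching an obstacle
MAX_RANGE_M = 8.0   # camera range quoted above


def warning_range(speed_mps):
    """Warning range grows with walking speed, clamped to the camera's range."""
    return min(MAX_RANGE_M, BASE_RANGE_M + speed_mps * LOOKAHEAD_S)


def pwm_duty(distance_m, speed_mps):
    """Map distance to an 8-bit PWM duty cycle on a logarithmic (Fechner-style) curve.

    Each halving of the distance adds the same increment of intensity,
    instead of intensity rising linearly as the obstacle approaches.
    """
    far = warning_range(speed_mps)
    if distance_m >= far:
        return 0    # outside the warning zone (also covers math.inf)
    if distance_m <= MIN_DIST_M:
        return 255  # very close: full strength
    level = math.log(far / distance_m) / math.log(far / MIN_DIST_M)  # 0..1
    return round(255 * level)
```

With these assumed constants, an obstacle 2 m away produces no vibration while standing still, but just under a quarter of full strength (58 of 255) when walking at 1 m/s, because the warning zone has stretched to 3.5 m.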

Sensor Fusion

- A* Navigation: Turn-by-turn routing over spatial graphs of familiar, recorded routes.

- Heading Lock: Magnetometer-anchored haptic drift correction.

- Fall Detection: Barometric pressure and IMU-based impact detection.
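To make the A* routing bullet concrete, here is a minimal sketch of A* over a small graph of recorded waypoints, followed by a helper that turns the resulting path into left/right/straight cues. Waypoint names, the flat (x east, y north) coordinates in meters, the straight-line heuristic, and the 30° "straight" threshold are illustrative assumptions; the project's actual graph format is not described here.

```python
import heapq
import math


def a_star(nodes, edges, start, goal):
    """Shortest path over a waypoint graph.

    nodes: {name: (x, y)} positions in meters (hypothetical recorded waypoints).
    edges: {name: [neighbor, ...]} walkable links between waypoints.
    Returns the list of waypoint names from start to goal, or None if unreachable.
    """
    def dist(a, b):
        (x1, y1), (x2, y2) = nodes[a], nodes[b]
        return math.hypot(x2 - x1, y2 - y1)

    # Straight-line distance never overestimates, so the first path to the goal is optimal.
    open_heap = [(dist(start, goal), 0.0, start)]
    came_from = {}
    g = {start: 0.0}
    while open_heap:
        _, cost, current = heapq.heappop(open_heap)
        if current == goal:
            path = [current]
            while current in came_from:
                current = came_from[current]
                path.append(current)
            return path[::-1]
        if cost > g[current]:
            continue  # stale heap entry: a cheaper route here was already found
        for nb in edges.get(current, []):
            new_cost = cost + dist(current, nb)
            if new_cost < g.get(nb, math.inf):
                g[nb] = new_cost
                came_from[nb] = current
                heapq.heappush(open_heap, (new_cost + dist(nb, goal), new_cost, nb))
    return None


def turn_cues(nodes, path, straight_deg=30.0):
    """Label each interior waypoint 'left', 'right' or 'straight' (x east, y north)."""
    cues = []
    for a, b, c in zip(path, path[1:], path[2:]):
        (ax, ay), (bx, by), (cx, cy) = nodes[a], nodes[b], nodes[c]
        ux, uy, vx, vy = bx - ax, by - ay, cx - bx, cy - by
        # Signed turn angle from incoming to outgoing segment; positive = counterclockwise.
        angle = math.degrees(math.atan2(ux * vy - uy * vx, ux * vx + uy * vy))
        if abs(angle) < straight_deg:
            cues.append("straight")
        else:
            cues.append("left" if angle > 0 else "right")
    return cues
```

On a toy map where `door (0,0) → hall (5,0) → stairs (5,5) → lab (10,5)` (15 m) competes with `door → lounge (0,10) → lab` (about 21.2 m), `a_star` picks the hallway route and `turn_cues` yields `["left", "right"]`, which could then drive both the haptic and the spoken turn-by-turn cues.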

No Cloud. No Internet.

Haptic Vision processes everything locally on the backpack-mounted computer. Your visual data never leaves the device, which protects your privacy and keeps the system working without a network connection.

48 Hours of Innovation

The Vision at CSUMB

We arrived at the CSUMB Hackathon 2026 with a single idea: build a device to help visually impaired people.

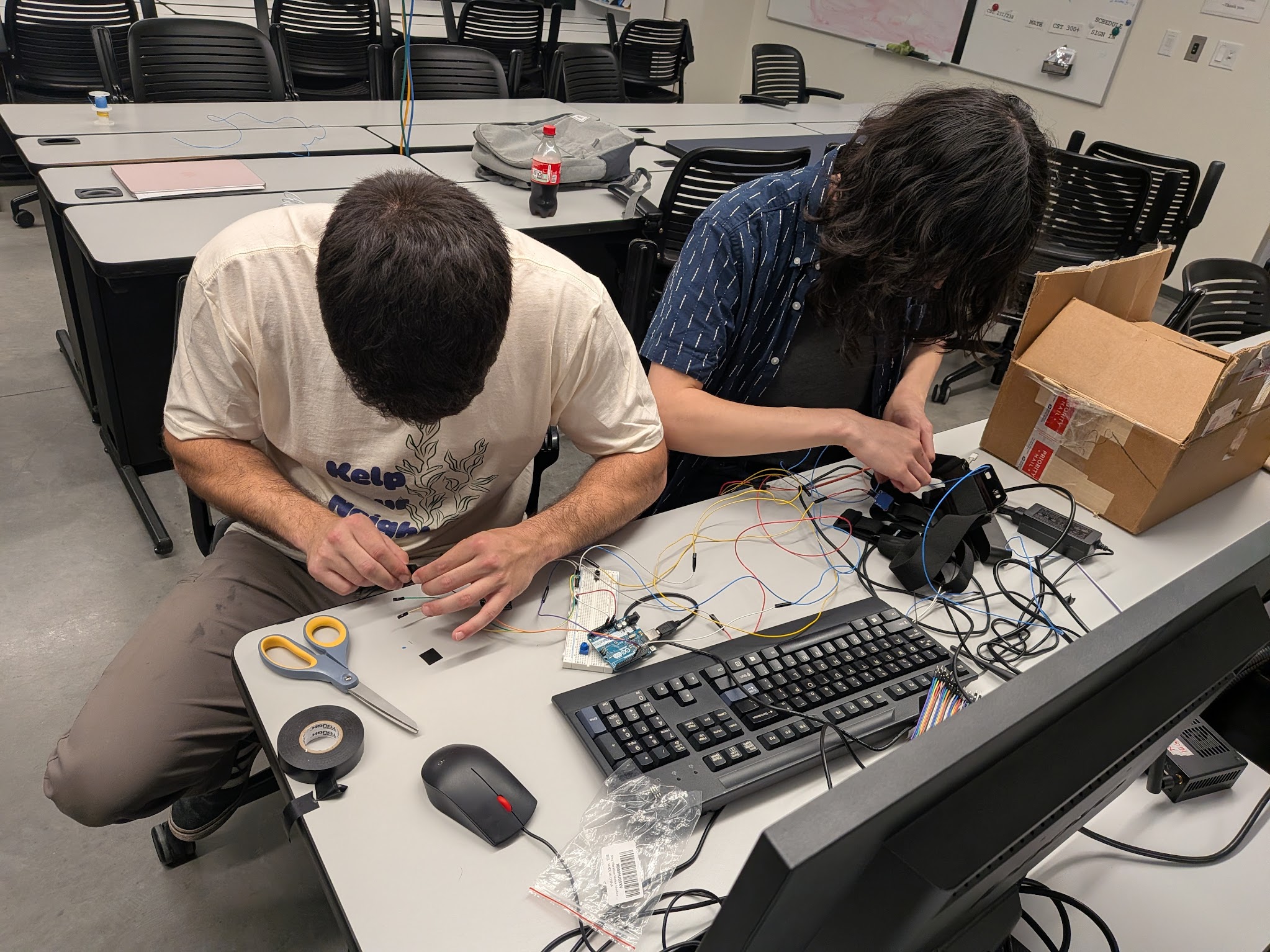

Arduino and Motors

Within a few minutes, the Arduino was successfully sending PWM signals to the vibration motors. We then built a USB interface so the Jetson could send commands to the Arduino.

ZED Depth Detection

Using the ZED 2i camera, we could now detect nearby objects and determine the correct signals to send to the Arduino.

Jetson and Camera Integration

The Jetson Orin NX could now use the ZED 2i camera to detect objects in front of it and interface with the Arduino to control the haptics.

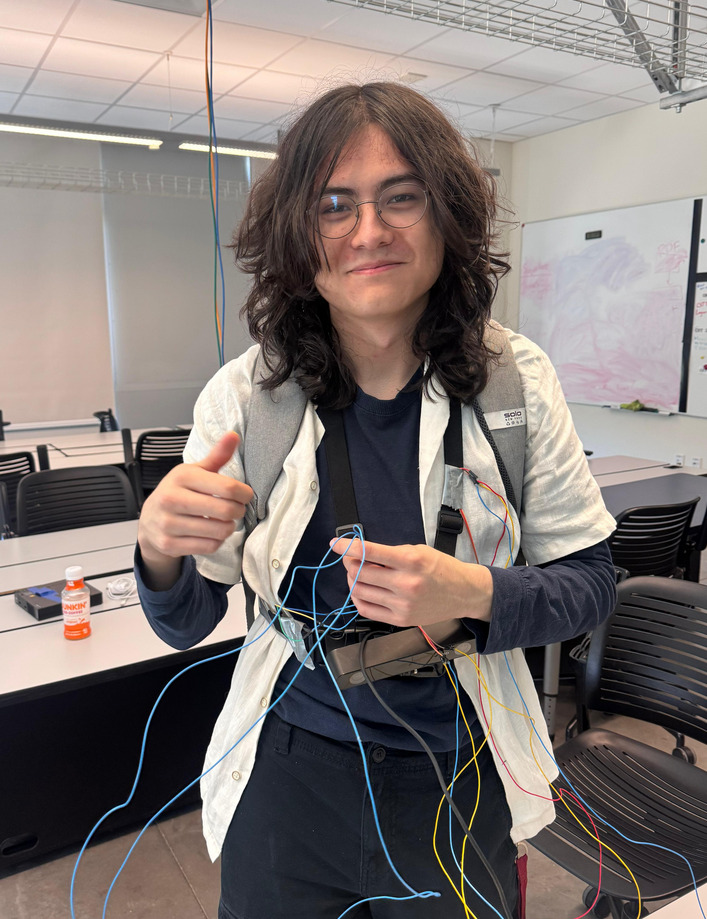

Wiring the Vest

This was by far the most tedious part. With the end of the Hackathon approaching, we had our fingers crossed for the final day, since we had much less time left.

Gemma 4 Inferencing

With almost everything wrapped up, we finished wiring the vest and added Gemma 4 as an on-device inference model to provide intelligent scene narration.

Best Tech Award

Finishing off the Hackathon with the "Best Tech" award!

Let's Help Those That Need It Most

Interested in Haptic Vision? We'd love to hear from you.

The Team Interview

Listen in as the creators of Haptic Vision discuss their 48-hour journey at the CSUMB Hackathon, the inspiration behind the technology, and how they brought edge AI and sensor fusion together. This interview took place post-Hackathon at a separate presentation at Monterey Peninsula College (MPC).
